One of the most misunderstood terms in computer science is “artificial intelligence”. While many people are familiar with the term artificial intelligence, or its shortened form, AI, the picture of AI they have in mind may not reflect reality. Sci-fi movies portray AI as simply a human-like intelligence that lives in a computer. That’s not entirely accurate.

Today we’re looking a bit more closely at real artificial intelligence initiatives, how they differ from pop culture depictions of AI, and some of the ethical and philosophical questions raised by artificial intelligence technology.

What is Artificial Intelligence?

In simple terms, artificial intelligence refers to intelligence displayed by machines, in contrast to the natural intelligence displayed by humans and other animals. In popular usage, the term refers to machines that can mimic features of natural intelligence such as problem solving, learning and innovation.

Within the scientific community, there is a well-known phenomenon called the “AI effect.” It describes how any capability once thought to require “artificial intelligence” stops being called AI as soon as machines can actually do it. For instance, tasks such as understanding human speech, playing games like chess and Go, and reading written text were all once considered the domain of “artificial intelligence,” yet they are now handled by common computer programs.

In short, as Tesler’s Theorem jokes, “AI is whatever hasn’t been done yet.”

Types of AI

One common classification divides artificial intelligence into three types: analytical, human-inspired and, finally, humanized AI. Analytical AI is the simplest, and encompasses abilities like learning, problem-solving and forming representations of the world around it. Human-inspired AI is more complex and would involve understanding and emulating human emotion. Essentially, these would be “emotionally intelligent” AI.

Finally, humanized AI would most closely resemble the sci-fi incarnation of a human-like intelligence that can think, reason, emote and feel in all the same ways as a human being. Humanized AI, in theory, would be fully self-aware and cognizant and, essentially, would have all of the elements that make a natural intelligence aware of its place in the world. This form of AI carries serious philosophical and ethical implications.

Ethics and Philosophy

Humanized AI raises a serious question: is a sufficiently intelligent computer program, one that shows evidence of self-awareness, a person? Should society extend human rights and legal protections to artificial intelligences? How should we react if an artificial intelligence proves hostile, or holds values contrary to those of its creator?

Even deeper than these questions, there are serious philosophical questions about the nature of consciousness. We know we are conscious, or, at least, each individual can know that they are conscious. However, it’s difficult to distinguish a sufficiently well-programmed piece of software from a truly self-aware machine intelligence. How can we know that the program in question is actually experiencing consciousness, not just emulating the signifiers of consciousness we programmed into it?

Reality vs Expectation

The gap between the reality of artificial intelligence and people’s expectations of it has led to a number of miscommunications between researchers and their funders. Companies and universities funding AI research often expect fully aware, sentient AI to leap fully formed from the researchers’ computers, while the researchers are simply making iterative probes into the nature of machine learning and intelligence.

In the short term, it’s unclear whether any of the software we currently have could be defined as “artificial intelligence,” because the AI effect reclassifies each innovation as a simple machine process rather than intelligence. In the long term, we will have a number of decisions to make regarding the future of artificial intelligence, how we as a species deal with machine intelligence, and what rights we extend to apparently self-aware programs.

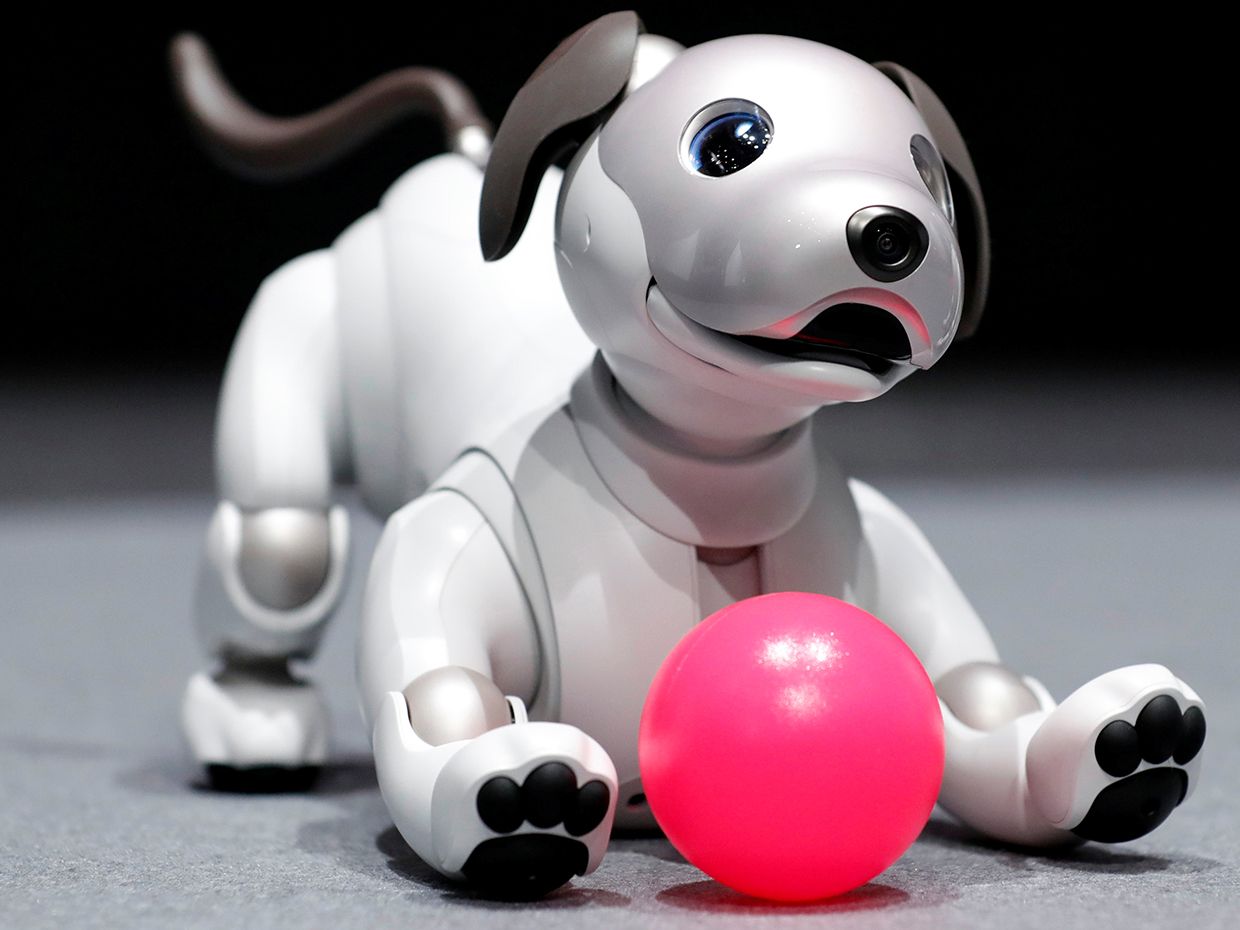

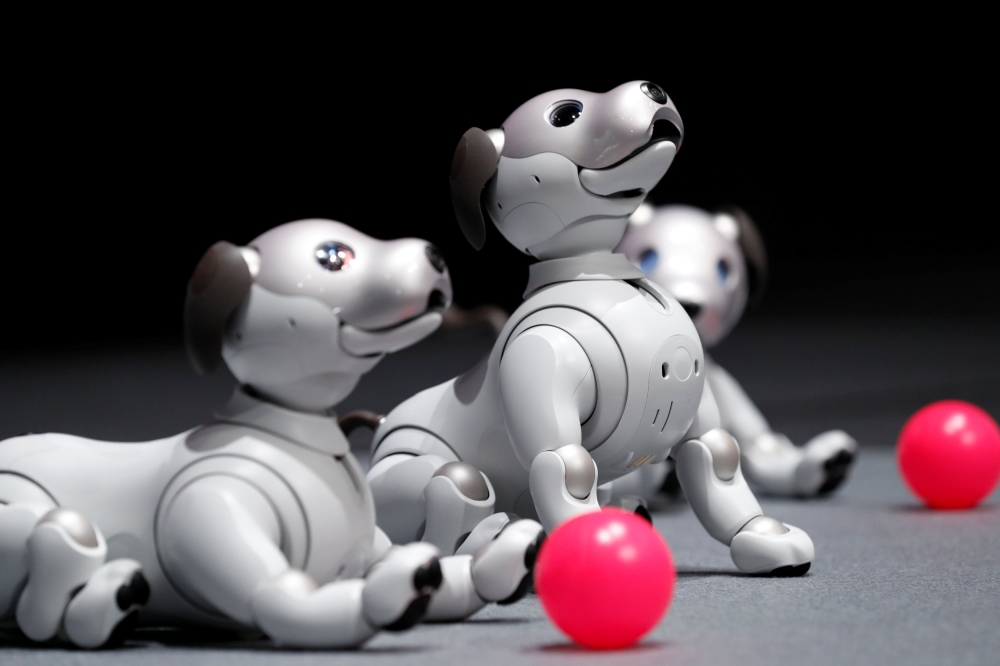

scratches. Aibo is also programmed with learning AI capability, so the more time you spend with him, the more he learns about his owner. Aibo also responds to training! Sony’s new hook for Aibo is that you have to train him through voice commands and positive reinforcement, just as you would a real dog. Aibo can mimic a real puppy thanks to his sensors and cameras, which help him understand both his environment and your interactions with him.
